What is Interpretability?

Learn what makes an algorithm interpretable, and how that differs from being explainable

What does it mean to be interpretable?

Models are interpretable when humans can readily understand the reasoning behind predictions and decisions made by the model.

The more interpretable a model is, the easier it is for someone to comprehend and trust it.

Models such as deep learning networks and gradient boosting ensembles are generally not interpretable and are referred to as black-box models because they are too complex for human understanding. A person cannot comprehend the entire model at once or follow the reasoning behind each decision.

What makes a model interpretable?

Interpretability is not a binary property; it also depends on the complexity of the particular model in question. For instance, a linear regression that uses five features is significantly more interpretable than one that uses 100 features.
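To make the five-feature case concrete, here is a minimal sketch of a linear model whose prediction can be read off term by term. The feature names, coefficients, and applicant values are hypothetical and chosen only for illustration; they are not fitted to any data.

```python
from typing import Dict, List, Tuple

# Hypothetical intercept and coefficients for a five-feature linear model.
# These numbers are illustrative assumptions, not results from a real dataset.
INTERCEPT = 0.10
COEFFICIENTS: Dict[str, float] = {
    "debt_to_income": 0.50,
    "late_payments": 0.04,
    "credit_utilization": 0.20,
    "years_employed": -0.01,
    "has_mortgage": -0.05,
}


def predict(features: Dict[str, float]) -> float:
    """Return the model score: intercept plus the sum of coefficient * value."""
    return INTERCEPT + sum(COEFFICIENTS[name] * features[name] for name in COEFFICIENTS)


def explain(features: Dict[str, float]) -> List[Tuple[str, float]]:
    """List each feature's contribution to the score, largest magnitude first."""
    contributions = [(name, COEFFICIENTS[name] * features[name]) for name in COEFFICIENTS]
    return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


# A hypothetical applicant.
applicant = {
    "debt_to_income": 0.4,
    "late_payments": 2,
    "credit_utilization": 0.5,
    "years_employed": 5,
    "has_mortgage": 1,
}

if __name__ == "__main__":
    print(f"score = {predict(applicant):.2f}")
    for name, contribution in explain(applicant):
        print(f"{name:>20}: {contribution:+.2f}")
```

With five terms, a reader can check every contribution by hand. The same listing for 100 features would still be exact, but far harder to take in at once, which is the sense in which interpretability degrades with model size.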

Interested in learning more? The National Institute of Standards and Technology (NIST) discusses different types of interpretability in detail in its reference white paper on Explainable AI.

Illustrative decision tree for marketing decisions in investment management. The logic of the tree is clear and transparent.
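As a rough sketch of why such a tree is transparent, a small decision tree can be written as plain nested rules, and the path taken for any individual is itself the explanation. The features, thresholds, and actions below are hypothetical and are not taken from the tree pictured.

```python
from typing import Any, Dict, List, Tuple


def recommend(client: Dict[str, Any]) -> Tuple[str, List[str]]:
    """Return a marketing action and the list of rules that led to it.

    All feature names and thresholds are illustrative assumptions.
    """
    path: List[str] = []
    if client["assets_under_management"] >= 1_000_000:
        path.append("assets_under_management >= 1,000,000")
        if client["months_since_last_contact"] > 6:
            path.append("months_since_last_contact > 6")
            return "schedule advisor call", path
        path.append("months_since_last_contact <= 6")
        return "send premium product brochure", path

    path.append("assets_under_management < 1,000,000")
    if client["opened_last_email"]:
        path.append("opened_last_email is True")
        return "send follow-up email", path
    path.append("opened_last_email is False")
    return "no action", path


if __name__ == "__main__":
    action, reasons = recommend(
        {
            "assets_under_management": 2_000_000,
            "months_since_last_contact": 8,
            "opened_last_email": False,
        }
    )
    print(action)
    for rule in reasons:
        print("  because", rule)
```

Every prediction comes with the exact conditions that produced it, and the whole model fits on one screen, so a reader can audit both a single decision and the overall logic.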

How is it different from model explainability?

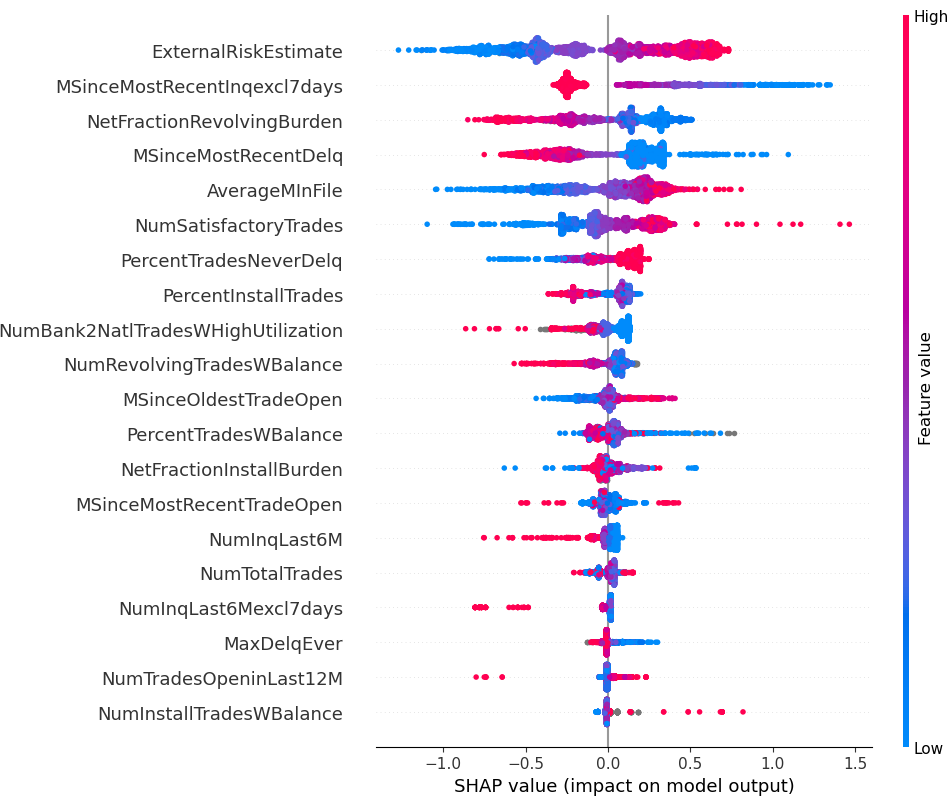

Compared to natively interpretable models, explainability tools such as SHAP cannot give an accurate, holistic global view of the model. We compare and contrast the two approaches in a case study on predicting risk of loan default.

In a recent paper, we show more comparisons between these approaches in the context of feature selection.

Example of SHAP output for predicting risk of loan default

Overcoming the price of interpretability

Our proprietary algorithms generate interpretable models that reach the same level of performance as black-box methods, as demonstrated by extensive benchmark studies published in top academic journals.

A large-scale benchmark study demonstrates that the performance of our Optimal Decision Trees (OCT-H and OCT) is competitive with black-box methods (XGBoost and Random Forest).

Ready to learn how interpretability can help?

Learn about the key benefits interpretability can bring to all stages of the data science process